Meta description: Learn how to spot a fake subscriber count, calculate audience authenticity, and build a practical vetting workflow that protects influencer marketing budget from fraud.

Recommended URL slug: /fake-subscriber-count

{

"@context": "https://schema.org",

"@type": "BlogPosting",

"headline": "Fake Subscriber Count: A 2026 Brand Guide to Vetting",

"description": "Learn how to spot a fake subscriber count, calculate audience authenticity, and build a practical vetting workflow that protects influencer marketing budget from fraud.",

"url": "https://reach-influencers.com/fake-subscriber-count",

"author": {

"@type": "Organization",

"name": "REACH"

},

"publisher": {

"@type": "Organization",

"name": "REACH",

"url": "https://reach-influencers.com"

},

"mainEntityOfPage": "https://reach-influencers.com/fake-subscriber-count"

}

A brand manager signs off on a creator partnership because the channel looks safe. Big subscriber base. Clean profile. Impressive top-line numbers.

Then the campaign goes live and the problems show up fast. Views land weak. Comments feel thin. Traffic quality disappoints. The creator looked large, but the audience was not there in a way that could move product.

This is the core issue behind a fake subscriber count. It is not just a vanity metric problem. It is a budget protection problem. If your team buys reach that does not exist, every downstream metric gets distorted, from expected awareness to conversion analysis.

Many marketers do not need another generic warning to “watch for bots.” They need a repeatable way to verify whether subscriber counts reflect a real, reachable audience. That means checking signals by hand first, then validating them with ratios and consistency checks before a contract gets signed.

The High Cost of Empty Numbers in Influencer Marketing

A fake subscriber count creates two types of damage at once.

The first is obvious. You pay for an audience that does not show up. The second is harder to catch. Your team makes the wrong planning decisions because the creator’s size looked stronger than it really was.

Why inflated audiences break campaign planning

When a channel looks bigger than its actual active audience, every forecast starts from a false assumption.

Your content calendar gets built around reach that may never materialize. Your paid amplification plan may be set too low because you expected strong organic lift. Your internal stakeholders may compare future creators against a bad benchmark and reject smaller, healthier partners who would have performed better.

That is why a fake subscriber count should be treated like a risk variable, not a creator quirk.

A weak partnership can create problems such as:

- Mispriced partnerships: You pay premium rates for audience volume that is largely inactive, low-quality, or artificial.

- Bad attribution decisions: Teams conclude that influencer marketing “did not work” when the actual issue was poor vetting.

- Reputation drag: Customers notice when comment sections look fake, empty, or disconnected from the creator’s content.

- Reporting noise: Inflated subscriber counts make post-campaign analysis harder because the channel looked healthier on paper than it was in practice.

The cost is usually hidden before launch

Most fake-audience problems do not reveal themselves during discovery.

They hide in plain sight because subscriber count is easy to scan and easy to overvalue. Junior teams often compare creators by audience size first, then only glance at content quality. That sequence is backwards. The audience has to be verified before the subscriber total has any planning value.

Tip: Treat subscriber count as a headline, not proof. The proof comes from audience behavior, consistency, and ratio analysis.

In practice, the worst creator choices are often not obvious scammers. They are channels with enough real signals to look credible at a glance, but enough inflation to distort expected results. That trade-off is what makes vetting important. You are not looking for perfection. You are looking for evidence that the audience is active, proportional, and commercially useful.

What works and what does not

A lot of teams still rely on surface checks.

That does not work well on its own.

Here is the difference:

| Approach | What happens |

|---|---|

| Choosing by subscriber total | You reward the easiest number to manipulate |

| Reading only a few top comments | You miss inconsistency across recent content |

| Checking one viral video | You mistake outlier performance for channel health |

| Reviewing recent videos and ratios together | You get a realistic picture of actual audience quality |

The good news is that fake subscriber count problems leave patterns. Some are visible without tools. Others show up clearly once you calculate a few core ratios. Both matter.

The Anatomy of a Fake Subscriber Count

A fake subscriber count is not one single thing. It usually comes from a mix of low-quality accounts, inactive purchased audiences, and manipulation tactics meant to make a creator look larger or safer than they are.

Not all fake subscribers behave the same way

Some fake accounts are straightforward bots. They follow channels but never engage in a believable way.

Others come from click farms or purchased subscriber packages. These accounts may be real profiles in a technical sense, but they are not following out of interest. They are part of an artificial growth tactic.

A third bucket comes from Sub4Sub activity. Creators trade subscriptions to inflate numbers. The count rises, but the audience has no real intent to watch, comment, or buy.

That distinction matters because each source leaves different signals behind:

- Bots often produce almost no meaningful interaction.

- Purchased accounts can create inflated volume with poor watch behavior.

- Sub4Sub audiences may be technically real people but still function like fake reach because they do not behave like true fans.

Why creators inflate subscriber counts

The motivation is usually commercial.

A larger audience can help a creator look more credible to brands, appear more competitive in search and platform rankings, or push them toward platform milestones. The pressure is strongest where follower size gets treated as a shortcut for trust.

That is why fake subscriber count issues show up across creator tiers, not just with small accounts trying to look bigger.

A useful example comes from Gleemo’s fake subscriber analysis of MrBeast’s YouTube channel, which reported 70.5 million doubtful followers out of 399.5 million total, or about 17.7% fake subscribers. The point is not that every doubtful follower reflects intentional fraud by the creator. The point is that audience authenticity is messy at scale, and brands cannot assume that very large channels are automatically clean.

What brands often misunderstand

Many teams think fake subscribers only matter if the count is extreme.

That is the wrong lens. Even moderate inflation can break planning if your team prices the creator on headline scale and ignores audience quality.

A fake subscriber count usually changes three things:

- Reach expectations get inflated: The brand expects a larger active audience than the creator can consistently deliver.

- Engagement context gets distorted: A channel may still receive comments and likes, but not in proportion to the subscriber base.

- Audience fit becomes harder to trust: If the top-line number is manipulated, the rest of the audience story deserves closer review.

Key takeaway: A fake subscriber count is rarely just a vanity problem. It changes how you value the partnership, forecast performance, and explain outcomes internally.

The practical takeaway

Do not think of fake subscribers as a yes-or-no issue.

Think of them as an authenticity gap. The larger the gap between subscriber total and actual audience behavior, the less useful that channel is for a brand campaign. Some creators have a small gap and remain viable. Others look large but have so little active audience behind the count that they become a poor commercial bet.

That is why careful vetting has to focus on patterns, not just labels.

How to Spot a Fake Subscriber Count Without Any Tools

You can catch a surprising number of fake subscriber count problems with a manual review.

Start with what the public profile already gives you. Recent videos. Comment quality. Posting rhythm. Whether engagement looks stable or random. These signals are not perfect, but they are strong enough to screen creators before you spend time on deeper analysis.

Check the last batch, not the best post

Do not start with the creator’s most popular content.

Open the most recent set of uploads and look for consistency. The strongest manual check is usually the 10-15 most recent videos, because erratic engagement across that range is a known red flag in fake subscriber analysis.

Look for patterns like these:

- One breakout hit surrounded by weak recent uploads: That may be normal. It may also mean the channel is being sold on an old peak.

- Huge subscriber count with thin view activity: That often points to inactive or artificial audience inflation.

- Sharp swings with no content explanation: If one ordinary upload performs far above or below similar posts, inspect further.

Read comments like an investigator

The comment section is one of the fastest ways to check whether a subscriber count is backed by real audience behavior.

Good comments usually reference the content itself. They mention a moment in the video, ask a specific follow-up question, or continue an inside joke that fits the creator’s community.

Bad comments usually look detached from the content.

Watch for things like:

- Generic praise: “Nice content,” “Amazing,” or “Great post” repeated across uploads.

- Gibberish or awkward phrasing: A known red flag from fake subscriber audits.

- Profiles that look empty: Little posting activity, weak profile identity, or obvious spam behavior.

- Comment mismatch: A supposedly local creator with audience chatter that does not fit the claimed market.

If the creator tells brands they have a strong audience in one market, but the visible interactions suggest something very different, pause there.

Look for growth that does not match the content

Abrupt audience jumps are one of the clearest warning signs. Gleemo’s breakdown of fake YouTube subscriber patterns notes that sudden subscriber growth spikes without corresponding viral content or external triggers can indicate bulk purchases or Sub4Sub activity.

Organic growth usually has a story behind it. A collaboration. A breakout topic. A high-performing video series. A mention from a larger creator.

Artificial growth often looks disconnected from anything visible on the channel.

Ask simple questions:

- Did a major upload happen right before the jump?

- Was there a collaboration or external promotion?

- Did the views rise with the subscriber gain, or did only the subscriber count move?

Tip: If you cannot explain a sharp jump by looking at the content itself, treat the audience as unverified until deeper review confirms it.
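If you do have a day-by-day history of subscriber gains and views (from a creator's analytics export or a third-party tracker, which is an assumption here), the last question can be sketched in a few lines. This is a rough illustration, not a detection standard: the function name and the 5x multiplier are hypothetical choices.

```python
from statistics import median

def unexplained_spikes(daily_sub_gains, daily_views, multiplier=5.0):
    """Return the indexes of days where subscribers jumped but views did not.

    A "jump" is a value above `multiplier` times the median for the period.
    The multiplier is an illustrative assumption, not an industry benchmark.
    """
    if len(daily_sub_gains) != len(daily_views):
        raise ValueError("both series must cover the same days")
    if not daily_sub_gains:
        return []
    # Use at least 1 as the baseline so an all-zero period cannot flag everything.
    base_gain = max(median(daily_sub_gains), 1)
    base_views = max(median(daily_views), 1)
    flagged = []
    for day, (gain, views) in enumerate(zip(daily_sub_gains, daily_views)):
        gain_spiked = gain > multiplier * base_gain
        views_spiked = views > multiplier * base_views
        if gain_spiked and not views_spiked:
            flagged.append(day)
    return flagged

# Hypothetical week: day 3 gains 4,000 subscribers on ordinary views,
# while day 5 gains 3,500 alongside a clear view spike.
gains = [120, 130, 110, 4000, 125, 3500, 115]
views = [9000, 9500, 8800, 9200, 9100, 60000, 9000]
print(unexplained_spikes(gains, views))  # [3]
```

A flagged day is a prompt to look for a collaboration or external mention, not proof of purchased subscribers.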

A good companion check is to compare your observations against practical benchmarks for what a good engagement rate looks like. Even before you calculate formal ratios, you can often tell whether audience interaction feels proportional.

Manual review checklist for YouTube and Instagram

Different platforms show different clues, but the logic stays the same.

On YouTube

- Recent uploads first: Review recent videos before top performers.

- Comment relevance: Check whether viewers refer to actual moments in the video.

- View consistency: See whether ordinary uploads get ordinary, stable response.

- Audience match: If the creator claims one audience profile, verify that visible interaction aligns.

On Instagram

- Caption-comment fit: Real comments respond to the image, story, or caption.

- Like-comment mismatch: Heavy follower counts with thin discussion can signal weak audience quality.

- Story-driven communities: Creators with real communities often have recognizable repeat commenters.

- Low-effort repetition: Recycled spam comments are an obvious warning sign.


What manual reviews cannot do

Manual vetting is a filter, not a final verdict.

A channel can look normal and still hide a fake subscriber count problem if the manipulation is subtle. That is why manual checks should decide whether a creator deserves deeper analysis, not whether they are fully approved.

Use them to eliminate obvious risks quickly. Then move to the numbers.

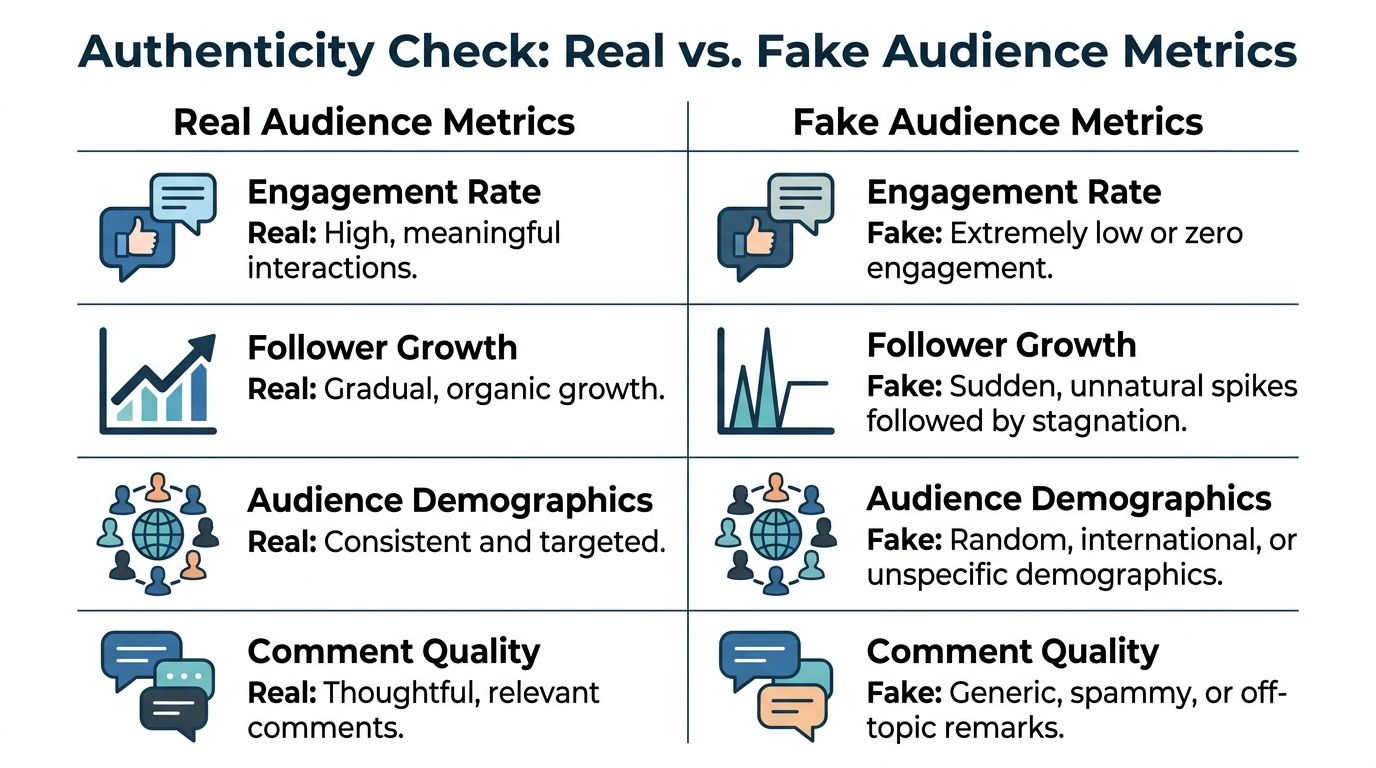

Using Data to Verify Audience Authenticity

A creator can look credible in a manual review and still waste your budget.

That usually happens when teams approve on surface signals, then skip the ratio check. A channel with a large subscriber count can still deliver very little active reach. The fix is simple. Measure whether recent audience behavior is proportionate to the size being sold.

Start with the view-to-subscriber ratio

For YouTube, the first number I check is the view-to-subscriber ratio. It compares average views on recent uploads to total subscribers, which gives you a fast read on how much of that subscriber base is still active.

According to Scrumball’s YouTube fake subscriber benchmark, legitimate YouTube channels typically show a view-to-subscriber ratio of 5-15%. The same source uses a useful example: a channel with 100,000 subscribers averaging only 1,000-2,000 views per video sits far below that benchmark.

Use this formula:

View-to-subscriber ratio = average views on recent uploads ÷ total subscribers

That number works best when you calculate it on recent uploads only. Skip lifetime channel averages. Remove obvious outliers if one video spiked for a clear reason, such as news relevance, a collaboration, or paid distribution. Then look for the underlying pattern across multiple uploads.
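As a minimal sketch, the calculation can be written as a small Python function. The function name, the `excluded` parameter, and the sample view counts are illustrative assumptions. Only the 100,000-subscriber example and the 5-15% range come from the benchmark quoted above.

```python
from statistics import mean

def view_to_subscriber_ratio(recent_views, subscribers, excluded=()):
    """Average views on recent uploads divided by total subscribers.

    recent_views: view counts for recent uploads only, not lifetime averages.
    excluded: indexes of uploads dropped as explainable outliers
              (news relevance, a collaboration, paid distribution).
    """
    if subscribers <= 0:
        raise ValueError("subscribers must be positive")
    skip = set(excluded)
    kept = [v for i, v in enumerate(recent_views) if i not in skip]
    if not kept:
        raise ValueError("no uploads left after removing outliers")
    return mean(kept) / subscribers

# 100,000 subscribers, recent uploads averaging 1,000-2,000 views each.
views = [1_000, 1_500, 2_000, 1_200, 1_800]  # hypothetical recent uploads
ratio = view_to_subscriber_ratio(views, 100_000)
print(f"{ratio:.1%}")  # 1.5%
```

A result of 1.5% sits well under the 5-15% range, which is why that example channel reads as a warning sign.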

Measure engagement against views

Subscriber-based engagement often flatters weak channels because subscribers are an accumulated total, not a current audience. A more useful benchmark is engagement based on views.

The same Scrumball benchmark states that authentic engagement is further supported by consistent 3-8% engagement rates based on views.

Use this formula:

Engagement by views = (likes + comments + shares) ÷ views

This ratio answers the question a media buyer cares about. When people are exposed to the content, do they respond like real viewers with genuine interest?
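The same formula as a short Python sketch. The function name and the sample numbers are hypothetical. Share counts are not always publicly visible, so the sketch assumes you pass 0 when you cannot see them.

```python
def engagement_by_views(likes, comments, shares, views):
    """(likes + comments + shares) divided by views, for one upload or a batch."""
    if views <= 0:
        raise ValueError("views must be positive")
    return (likes + comments + shares) / views

# Hypothetical upload: 20,000 views, 700 likes, 80 comments, 20 shares.
rate = engagement_by_views(likes=700, comments=80, shares=20, views=20_000)
print(f"{rate:.1%}")  # 4.0%
```

In this example the result lands inside the 3-8% range quoted above. Run it on several recent uploads, not one, before drawing a conclusion.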

Use the metrics together

No single ratio should approve or reject a creator on its own. View-to-subscriber ratio, engagement by views, and performance consistency each capture a different part of audience quality.

A practical review looks like this:

| Metric | Healthy signal | Warning signal |

|---|---|---|

| View-to-subscriber ratio | Falls within the typical 5-15% benchmark from Scrumball | Consistently below that range |

| Engagement by views | Stays within the typical 3-8% range from Scrumball | Repeatedly weak relative to views |

| Performance pattern | Stable and explainable | Erratic without a content reason |

Here is the workflow I use with junior buyers. Pull the last 8 to 12 uploads. Calculate average views. Divide by subscribers. Then calculate engagement by views on the same content set. If both ratios are weak, review posting cadence, format changes, and any recent shifts in content direction before making a decision. REACH shortens that process by centralizing these checks, so your team is not rebuilding the same spreadsheet for every shortlist.
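For teams that still work from a spreadsheet export, that workflow can be sketched as one screening function. This is an illustration under stated assumptions, not how REACH implements its checks: the `Upload` type, the function name, and the choice to use the lower end of each quoted range as a floor are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Upload:
    views: int
    likes: int
    comments: int
    shares: int = 0  # pass 0 when share counts are not visible

# Assumption: the lower ends of the quoted 5-15% and 3-8% ranges act as floors.
VIEW_RATIO_FLOOR = 0.05
ENGAGEMENT_FLOOR = 0.03

def screen_channel(uploads, subscribers):
    """Compute both ratios on the same set of recent uploads."""
    if not uploads or subscribers <= 0:
        raise ValueError("need at least one upload and a positive subscriber count")
    total_views = sum(u.views for u in uploads)
    if total_views <= 0:
        raise ValueError("uploads have no views to measure against")
    interactions = sum(u.likes + u.comments + u.shares for u in uploads)
    view_ratio = (total_views / len(uploads)) / subscribers
    engagement = interactions / total_views
    return {
        "view_ratio": view_ratio,
        "engagement": engagement,
        "weak_view_ratio": view_ratio < VIEW_RATIO_FLOOR,
        "weak_engagement": engagement < ENGAGEMENT_FLOOR,
    }

# Hypothetical channel: 100,000 subscribers, ten similar recent uploads.
uploads = [Upload(views=1_500, likes=30, comments=5) for _ in range(10)]
result = screen_channel(uploads, subscribers=100_000)
print(result["weak_view_ratio"], result["weak_engagement"])  # True True
```

When both flags come back true, the next step is the manual follow-up described above: posting cadence, format changes, and shifts in content direction.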

A simple interpretation framework

The goal is not to hunt for a single magic cutoff. The goal is to estimate whether the active audience is large enough, real enough, and consistent enough to justify the fee.

Use a four-part review:

- Ratio check: Does recent view volume make sense relative to subscriber count?

- Engagement check: Do viewers interact at a level that fits a real, interested audience?

- Consistency check: Are recent uploads performing within a believable range?

- Audience fit check: Does this creator appear to reach the market you need?

If you want to standardize that process across a team, a structured fake follower checker workflow helps because it keeps the same review logic across every creator and lets REACH automate the repetitive screening work.

What works in practice

A weak ratio is a prompt to investigate, not a verdict by itself.

Some channels underperform because they changed formats, paused posting, or built a subscriber base around a topic they no longer cover. Those cases can still be workable if recent videos show stable recovery and the audience quality is strong. But if low ratios are paired with thin engagement and inconsistent performance, you are usually looking at inflated audience value.

That is the standard to keep in mind. Size gets attention. Active audience quality earns budget.

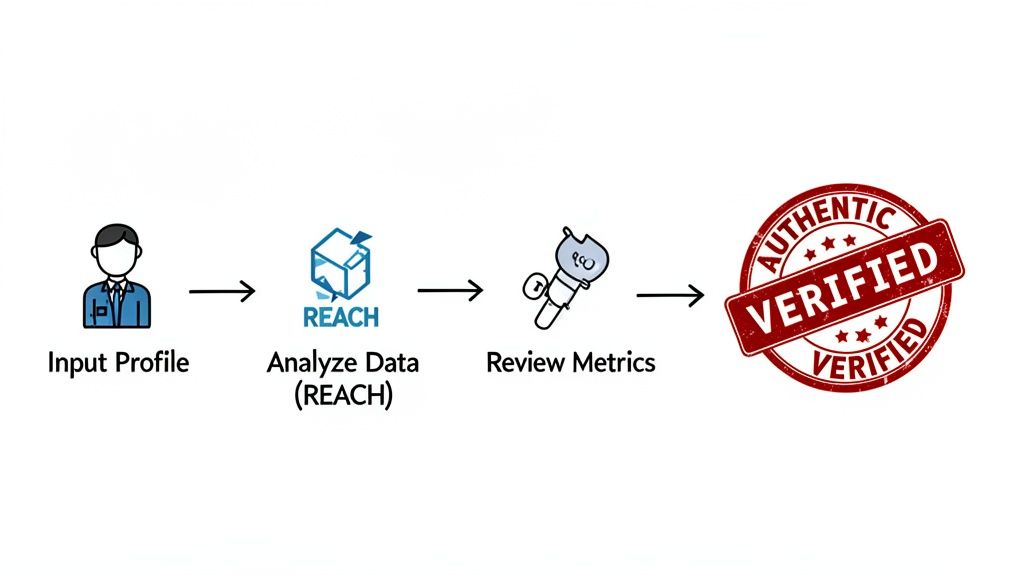

Your Fraud-Proof Vetting Workflow with REACH

A shortlist looks safe until procurement asks why three approved creators delivered weak reach last quarter, and no one can show the review logic behind the approvals.

That failure usually starts with process, not intent. The team reviewed creators in different ways, weighted different signals, and approved based on individual judgment instead of a shared standard. Fake subscriber count risk gets through when vetting is loose.

REACH solves that by turning creator review into a repeatable workflow. The goal is simple. Score the same signals the same way, document the decision, and keep the evidence in one place.

Step one, run a fast pass before anyone discusses rate

Start with elimination, not negotiation.

Review the public profile, the last several uploads, and the visible interaction quality. Use the ratio work from the previous section, then add a basic credibility screen. If the channel shows weak view-to-subscriber alignment, thin comments, erratic performance, and growth that has no content explanation, it does not belong in the paid shortlist.

Use three quick checks:

- Does recent performance support the claimed audience size?

- Do comments and interactions look human and relevant to the content?

- Is there a believable reason for the channel’s current scale and momentum?

If the creator fails this pass, stop the review there. That saves analyst time and keeps weak options from gaining internal momentum.

Step two, quantify usable audience value

Subscriber count is not the buying metric. Usable audience is.

A creator can still be a fit after some audience loss or historical inflation if the current audience is active, relevant, and commercially credible. That is why a professional review should estimate what portion of the audience still matters for this campaign.

At this stage, score the creator on three questions:

| Review question | Why it matters |

|---|---|

| How much of the current audience appears reachable? | Budget follows likely impressions and responses, not headline scale |

| Does the audience match the campaign market? | Category fit and geography often matter more than raw size |

| Do engagement patterns support paid performance? | Consistent, believable interaction reduces execution risk |

Junior teams often slip at this stage. They treat a suspicious signal as an automatic rejection, or a large subscriber number as automatic proof. Strong vetting handles trade-offs. A channel with moderate ratio weakness but strong market fit may be worth a hold. A large channel with multiple credibility issues usually is not.

Step three, centralize evidence so approvals are defensible

Scattered screenshots do not scale. They also fail the moment a client or finance lead asks why a creator was approved.

A centralized review record should include:

- Ratio calculations and screening notes

- Recent content performance

- Audience geography and demographic fit

- Manual review comments

- Approval, hold, or rejection status with reason codes
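One way to picture such a record is a small data structure that holds every item in the list above. The sketch below is a hypothetical schema for illustration only. It is not REACH's data model, and every field name is an assumption. The type hints need Python 3.9 or later.

```python
import json
from dataclasses import dataclass, field, asdict

VALID_STATUSES = {"approve", "hold", "reject"}

@dataclass
class ReviewRecord:
    creator: str
    view_ratio: float                    # ratio calculations
    engagement_by_views: float
    recent_views: list[int]              # recent content performance
    audience_geography: dict[str, float] # share of audience by market
    manual_notes: list[str] = field(default_factory=list)
    status: str = "hold"
    reason_codes: list[str] = field(default_factory=list)

    def __post_init__(self):
        # Reject typos early so every record uses the same three statuses.
        if self.status not in VALID_STATUSES:
            raise ValueError(f"status must be one of {sorted(VALID_STATUSES)}")

record = ReviewRecord(
    creator="example-channel",  # hypothetical creator
    view_ratio=0.015,
    engagement_by_views=0.023,
    recent_views=[1_000, 1_500, 2_000],
    audience_geography={"US": 0.4, "other": 0.6},
    manual_notes=["Generic comments repeated across uploads"],
    status="reject",
    reason_codes=["WEAK_VIEW_RATIO", "GENERIC_COMMENTS"],
)
print(json.dumps(asdict(record), indent=2))
```

Storing the record as plain JSON keeps it readable for a client or finance lead who asks why a creator was approved or rejected.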

Teams that formalize this process with an influencer marketing platform can keep discovery, vetting, outreach, and reporting in one system. REACH helps by automating repetitive checks, storing review history, and giving every buyer the same decision framework.

Documentation matters for another reason. Good fraud prevention requires both filtering out bad partners and recording why approved creators met the standard. That audit trail gets more valuable as the team grows.

Step four, approve based on campaign risk tolerance

Not every campaign needs the same bar.

A product launch with tight performance targets should use stricter approval rules than a small awareness test. A regulated category, a premium price point, or a market-entry campaign usually justifies more caution. Set those thresholds before outreach starts, not after a favorite creator is already in discussion.

A practical decision model works like this:

Approve when audience quality, ratios, and fit support the fee.

Hold for review when the creator has clear market relevance but one or two signals need explanation.

Reject when weak ratios, poor interaction quality, and unexplained growth appear together.

Write down the reason every time. Over a few campaign cycles, that record becomes your team’s operating standard instead of tribal knowledge.
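The decision model can be sketched as a short function that always returns a reason with the outcome. The names are hypothetical, and one rule is an assumption the model above does not spell out: a creator without clear market relevance is rejected, because both approve and hold depend on fit.

```python
from enum import Enum

class Decision(Enum):
    APPROVE = "approve"
    HOLD = "hold"
    REJECT = "reject"

def decide(weak_ratios, poor_interaction, unexplained_growth, market_fit):
    """Return (decision, reason) from three warning signals and market fit."""
    issues = sum([weak_ratios, poor_interaction, unexplained_growth])
    if issues == 3:
        return Decision.REJECT, "weak ratios, poor interaction, and unexplained growth together"
    if not market_fit:
        # Assumption: no clear market relevance means no basis for approve or hold.
        return Decision.REJECT, "no clear market relevance"
    if issues == 0:
        return Decision.APPROVE, "audience quality, ratios, and fit support the fee"
    return Decision.HOLD, f"{issues} signal(s) need explanation"

decision, reason = decide(
    weak_ratios=True, poor_interaction=False,
    unexplained_growth=False, market_fit=True,
)
print(decision.value, "-", reason)  # hold - 1 signal(s) need explanation
```

Stricter campaigns can tighten the rules, for example by treating any two signals as a rejection. Set that before outreach starts.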

Step five, keep the review open after contracting

Approval is the start of risk management, not the end.

Track campaign delivery against the same logic used in vetting. If engagement quality drops, views detach from historical norms, or audience fit looks worse during execution, log it in REACH and use it in renewal decisions. Effective fraud prevention includes screening before the buy, monitoring during the campaign, and feeding results back into the next shortlist.

That closed-loop process is how teams protect budget at scale.

The Future Is Authentic: Building Partnerships That Last

A fake subscriber count is not only a media efficiency problem. It is a trust problem.

When brands keep rewarding inflated audiences, they train the market to optimize for appearances instead of outcomes. That hurts everyone involved. Brands lose money. Honest creators get underpriced. Agencies waste time defending weak campaign results that should have been prevented before launch.

Why authenticity wins over time

Authentic audiences behave differently.

They respond to content that fits the creator. They produce comments that sound like real people. They create enough consistency that a brand can forecast performance with some confidence.

That does not mean every creator with a smaller audience is automatically a better choice. It means a smaller, believable audience is often more useful than a large, questionable one.

The strongest long-term creator programs usually share a few habits:

- They reward fit over scale

- They review audience behavior before pricing

- They keep vetting standards documented

- They revisit creator quality after each campaign

What brands should stop doing

Some habits create fake subscriber count risk almost by default.

Stop doing things like:

- Shortlisting by subscriber count alone

- Treating one viral post as proof of creator quality

- Assuming large creators are safer by default

- Using a single percentage cutoff as the whole fraud policy

Those shortcuts feel efficient, but they produce weak creator portfolios. Good vetting is slower at the start and cheaper at the end.

A better standard for brand teams

Brand teams need a standard that junior marketers can follow without guesswork.

That standard should be simple:

Review the recent content. Check whether audience behavior looks human. Validate the key ratios. Make a decision based on audience usability, not only on follower totals. Document why the creator passed.

That approach does two important things. It protects budget now, and it builds a cleaner creator roster over time.

Key takeaway: The future of influencer marketing belongs to brands that can tell the difference between visible scale and usable influence.

A fake subscriber count will keep evolving because the incentive to look bigger is not going away. But the defense is already clear. Use manual observation to spot obvious problems. Use ratio analysis to verify audience health. Use a repeatable workflow so your team does not improvise quality control every time a creator looks promising.

The brands that do this well stop chasing empty numbers. They buy real attention instead.

If you want a faster way to discover creators, verify audience quality, manage outreach, and keep campaign decisions organized in one place, explore REACH. It helps brands and agencies turn influencer vetting from a loose manual task into a repeatable operating process that protects budget and supports better partnerships.